Celebrating 50 Years of Evaluated Nuclear Data

Using recent experimental data and improvements in theory and simulation, a group of national labs, universities, and companies recently published the eighth major update to a library of nuclear reaction information that was first published in 1968 and is used in simulations for energy, medicine, national security, and other applications.

June 1, 2018


(Left to right) Michal Herman, Alejandro Sonzogni, David Brown, Letty Krejci, Boris Pritychenko, Gustavo Nobre, Joann Totans, Tim Johnson, Elizabeth McCutchan, Ramon Arcilla, and (not pictured) Said Mughabghab of Brookhaven Lab's National Nuclear Data Center contributed to the recent update of the Evaluated Nuclear Data File. First published 50 years ago, this nuclear data library is widely used across the globe in nuclear science and technology applications.

What happens when atomic nuclei collide with other nuclei or subatomic particles? This question is relevant to many applications, from using radioisotopes in medical diagnoses and developing new sources of sustainable energy to dating archeological artifacts, protecting people from radiation, and understanding the origin and future of the universe. However, the physics underlying nuclear reactions is complicated. Neutrons, which are found inside the nucleus of atoms, have no charge, so they easily penetrate matter. When a neutron strikes a nucleus, many different interactions may occur: the neutron could bounce (scatter) off the nucleus, be absorbed by the nucleus to form a larger nucleus (emitting photons in the process), knock nucleons (protons and neutrons) or small clusters of nucleons from the nucleus, or split the nucleus roughly in half (fission). Depending on the energy of the incoming neutron, the type of atomic nuclei, and other conditions, these complex physical interactions can be vastly different. In the case of nuclear fission, hundreds of nucleons in one nucleus rearrange to form two nuclei. No comprehensive theory currently exists to predict such highly complex physical processes. Even more straightforward nuclear reactions rely on detailed nuclear structure and interactions that continue to be the subject of active research, more than 100 years since the discovery of the atomic nucleus.

An animation showing the chain reaction caused by a uranium atom undergoing fission. Nuclear power plants use the energy produced by this process to generate electricity. Credit: Siege Media animation

Because these reactions occur on microscopic scales, physicists can only calculate the probability of a neutron interacting with the nucleus of a target atom, not the certainty. The measure of this probability is known as the neutron cross section. To determine the cross section for each of the possible nuclear reactions, physicists conduct experiments in which they direct neutrons of different energies at materials of interest. A detector then records the energy, position, and other information about the reaction products. These experimental data are combined with nuclear model calculations that fill in any measurement gaps. For example, at low energies, cross sections exhibit resonances, or excitations of the compound nucleus, an intermediate, barely bound state formed when the incident particle and target nucleus combine. At higher energies, the resonances often lie too close together to be resolved experimentally. Physicists must then rely on modeling to estimate the fraction of the cross section lost as a result of these missing resonances.
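As a rough illustration of how a cross section translates into a probability, the sketch below uses the standard exponential attenuation relation for a uniform slab: the chance that a neutron interacts at least once within a thickness x is 1 - exp(-n·σ·x), where n is the number density of target nuclei and σ is the cross section. The function name and all input numbers are illustrative assumptions, not values taken from the ENDF library.

```python
import math

BARN_CM2 = 1.0e-24  # one barn, the customary cross-section unit, in cm^2


def interaction_probability(sigma_barns, number_density_per_cm3, thickness_cm):
    """Probability that a neutron interacts at least once in a uniform slab."""
    # Macroscopic cross section (per cm): interaction probability per unit path length.
    macroscopic = sigma_barns * BARN_CM2 * number_density_per_cm3
    return 1.0 - math.exp(-macroscopic * thickness_cm)


# Hypothetical inputs: a 10-barn cross section, 8.5e22 nuclei per cm^3, a 1 cm slab.
p = interaction_probability(10.0, 8.5e22, 1.0)
print(f"Interaction probability: {p:.3f}")
```

A real transport calculation would look up σ as a function of neutron energy and reaction type, which is the information an evaluated library supplies.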

Scientists conducting experimental and theoretical research in nuclear physics, astrophysics, energy, medicine, and nonproliferation and safety use cross sections to understand how particles decay, absorb energy, and interact. Because cross sections are the key measurable nuclear data needed to understand how nuclear energy systems would operate in the real world, it is imperative that these data be evaluated, tested, validated, and converted into a usable format. An effort to ensure the availability of such high-quality nuclear data has been going on for the past 50 years.

A history of nuclear data

In the mid-1960s, the Atomic Energy Commission—the predecessor to the U.S. Department of Energy (DOE)—called for the creation of a national library containing nuclear reaction data for modeling nuclear power generation. This database was intended as a reference standard to replace the many individual nuclear data libraries that existed at various institutions around the nation and world. A collaboration of national labs and companies in the United States and Canada was established to create the library, and in 1968, this Cross Section Evaluation Working Group released the first Evaluated Nuclear Data File (ENDF) library: ENDF/B, with the “B” referring to the production version (ENDF/A was the development version).

The Brookhaven Graphite Research Reactor, which operated from 1950 through 1968, was the Laboratory's first major facility and the nation's first reactor built for peacetime atomic research.

Since that time, the National Nuclear Data Center (NNDC)—established in 1952 at DOE’s Brookhaven National Laboratory—has maintained the ENDF library and chaired the CSEWG, which reviews and periodically updates the library. ENDF is one of several products released by the NNDC-coordinated U.S. Nuclear Data Program, which provides the most current and accurate nuclear data for nuclear science and engineering applications.

“When CSEWG was first established, Brookhaven Lab had been collecting nuclear data for some time, as it started as a reactor physics lab in 1947,” said physicist David Brown, who manages the ENDF library at NNDC. “The Neutron Cross Section Compilation Group was established in Brookhaven’s Physics Department in 1952, and three years later, this group published a reference book of neutron cross-section plots known as BNL-325.”

Over the years, different subgroups have formed to handle specific parts of the data, and CSEWG membership has changed to represent various organizations from government, academia, and industry. Core members have included DOE’s Brookhaven, Lawrence Livermore, Los Alamos, and Oak Ridge national labs; the Naval Nuclear Laboratory; and the National Institute of Standards and Technology.

Toward a more complete and accurate library

The data that the CSEWG ultimately adds to the ENDF library are based on the best available experimental results and nuclear model calculations. A significant amount of work goes into selecting the best averages of experimental measurements, adjusting the models to agree with those results, and estimating uncertainty. The evaluated data are put through several checkpoints to assess their performance, including simple formatting and basic physics checks, and more involved testing. One example of such testing is benchmarking against experiments on well-studied systems, running simulations with the same computer codes that physicists and engineers routinely use for basic research and applied technology. This data testing and validation is critical to ensuring the reliability and safety of nuclear energy systems.

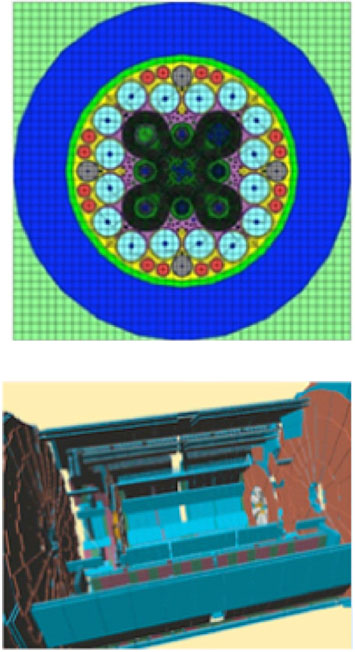

A simulation of the Zero-Power Reactor (ZPR-6 Assembly 6a) experiment, run by Argonne's neutron transport code, UNIC, which was intended to reduce uncertainties and biases in reactor design calculations. UNIC has since been replaced by successor codes, which also use the ENDF library. Credit: Argonne National Lab

“ENDF gets used any time someone simulates something with the word ‘nuclear’ in it,” said Brown. “It contains all the information you would want to know if you direct a neutron at any material, as well as other kinds of data, including for charged particle–induced reactions and radionuclide decay.”


Top: SCALE, a nuclear simulation code system developed by Oak Ridge National Laboratory, was used to create a model of the Advanced Test Reactor at Idaho National Laboratory. Bottom: The Geant4 model of ATLAS—a particle detector at CERN in Switzerland—shows the tracking of elementary particles called muons. Geant4 was developed as a tool to simulate particle transport, and is used in high-energy, nuclear, and accelerator physics, and in medical and space science.

In particular, ENDF is the main nuclear data library used by particle transport codes to simulate what happens when a neutron or other particle is transported through a material: Does it get scattered or absorbed? Is fission or a different type of reaction induced? What are the energies of emitted particles?

In early February, the CSEWG published the eighth major release of the ENDF library (ENDF/B-VIII.0). This publication coincided with the 50th anniversary of the ENDF library, and ENDF/B-VIII.0 contains both new and improved data.

One important change is the incorporation of new neutron data standards. These reference standards are nuclear reaction cross sections that scientists measure at the same time they measure the cross section of a material of interest. Assuming that the measured and reference values match, many sources of error can be eliminated.

“Experimenters can simplify their measurements if they measure relative to a standard,” said NNDC director and CSEWG chair Alejandro Sonzogni. “For example, gold-197 is ideal from a chemical standpoint. It is gold’s only naturally occurring isotope, does not interact with many things, and is very malleable. When exposed to a neutron beam, it becomes gold-198, an isotope whose decay characteristics are very well known.”
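The benefit of measuring relative to a standard can be sketched as a simple ratio. If the sample and the standard are exposed to the same neutron beam for the same time, the beam intensity cancels, leaving only the ratio of reaction counts per target atom. The function below is a minimal sketch under that assumption; its name, its parameters, and the numbers are hypothetical and chosen only to show the arithmetic.

```python
def cross_section_from_ratio(counts_sample, atoms_sample,
                             counts_standard, atoms_standard,
                             sigma_standard_barns):
    """Infer a cross section from counts measured relative to a standard.

    Assumes both targets see the same neutron flux for the same time and
    that detection efficiencies are already folded into the counts.
    """
    reactions_per_atom_sample = counts_sample / atoms_sample
    reactions_per_atom_standard = counts_standard / atoms_standard
    # The unknown flux and exposure time cancel in this ratio.
    return sigma_standard_barns * reactions_per_atom_sample / reactions_per_atom_standard


# Hypothetical numbers: the sample shows one-fifth as many reactions per atom
# as a standard whose cross section is taken to be 100 barns.
sigma = cross_section_from_ratio(2_000, 1.0e20, 10_000, 1.0e20, 100.0)
print(f"Inferred cross section: {sigma:.1f} barns")
```

Because the result scales directly with the standard's value, any improvement in the standards carries through to every measurement made relative to them.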


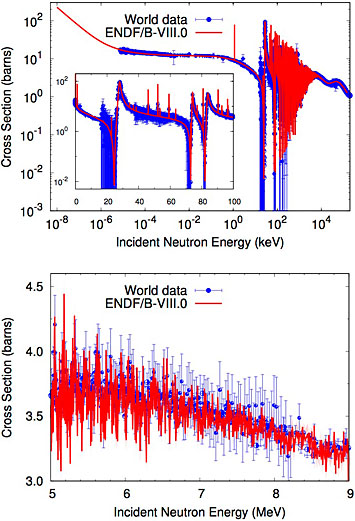

Neutron cross-section plots of iron-56, with blue representing world data and red representing data from the latest ENDF library release. The top plot shows the total cross section across the low-energy range; a typical view of the neutron resonances (peaks) is seen in the inset. The bottom plot shows part of the high-energy region of the same cross section. A comparison of the plots reveals that at lower energies, the fluctuations are spaced farther apart and individual resonances are resolved; at higher energies, the oscillations are much narrower and often overlap, making it impossible to distinguish one peak from another. Scientists use computational models to describe this "unresolved" resonance region.

The release also contains improved scattering data for thermal neutrons (relatively slow, low-energy neutrons that have the same average energy as surrounding atoms, as determined by temperature) and adds new data from the Coordinated International Evaluated Library Organization (CIELO) for neutron reactions on several important isotopes: oxygen-16, iron-56, uranium-235 and -238, and plutonium-239. CIELO is a pilot project to provide more accurate cross sections, energy spectra, and angular distributions for fission, fusion, and other nuclear applications. Other advances include updated data for light nuclei (such as carbon and hydrogen), structural materials, and charged-particle reactions.

Recent experimental data obtained by scientists in the United States and Europe, as well as improvements in theory and simulation, made these updates possible. The last major release (ENDF/B-VII.0) occurred in 2006.

According to Sonzogni, putting together an evaluation is a challenging task: “Neutrons are not charged so they can interact with nuclei at any low energy, unlike, for example, positively charged protons, which need to overcome repulsive forces. As a result, we need to consider every possible interaction that can happen from zero energy all the way up to 20-million electron volts.”

For the latest release, the Brookhaven team focused on iron-56. Iron is one of the most common elements in structural materials used in nuclear technology. Though nuclear data for iron are not considered as high a priority as nuclear data for nuclear fuels such as uranium or plutonium, they are important for designing nuclear equipment that operates in radiation environments, including those of reactors and particle colliders.

“We had to evaluate thousands of datasets and did a lot of detective work to find missing data,” said Brown, who collaborated with NNDC colleagues Ramon Arcilla, Michal Herman, Said Mughabghab, and Gustavo Nobre. “For example, we needed better angular distribution data. It took quite a bit of work to track down one particular dataset because it was never formally published—it was only published in conference proceedings. When we found it, we discovered that several detectors used in the experiment showed a discontinuity as a function of incident energy. We had to reverse-engineer the experiment in order to correct this issue. Even then, the data only helped for certain angles, and we had to fill in the remaining angles through judicious fitting and modeling.”

After producing the data tables, they tested the data by simulating shielding experiments performed in the 1970s by the Soviet Union and in the 1980s by Lawrence Livermore National Laboratory. In these experiments, scientists placed neutron sources inside a ball of material such as iron, and then measured the spectra of transmitted neutrons and photons.


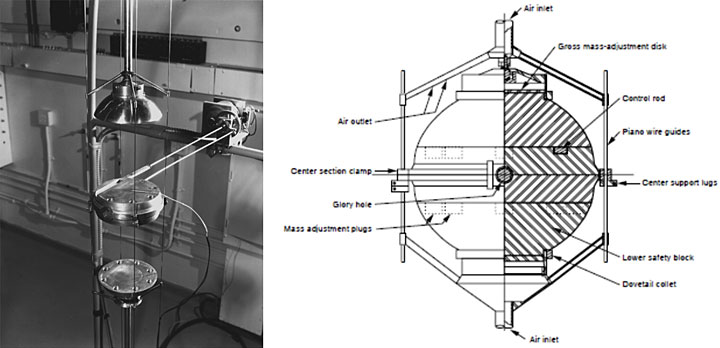

In the mid-1950s, Los Alamos Scientific (now National) Laboratory built and operated an experimental reactor assembly called Jezebel, seen above in its disassembled configuration (left). This assembly was constructed with a bare sphere of plutonium-239 metal. A schematic of the assembly is shown on the right. Over several years, scientists performed hundreds of benchmarking experiments with Jezebel to validate and improve evaluated nuclear data and calculations obtained through computational methods. Credit: Los Alamos National Laboratory

The Brookhaven team members also simulated the performance of small zero-power nuclear reactors (reactors that do not generate any power and are used to test different reactor configurations before building a full system). Through these simulations, they discovered that the ratio of the number of neutrons produced to the number lost or leaked strongly depended on the presence of several materials beyond the one of interest, including the minor components of steel, which contains not only iron but also chromium, nickel, and other elements. As a result, they had to do several evaluations instead of one. Other CSEWG organizations faced similar scenarios with their respective materials of interest.

“The validation performed by the CSEWG showed that ENDF/B-VIII.0 is the best-performing and highest-quality library yet,” said Brown.

Los Alamos National Lab recognized the ENDF/B-VIII.0 effort with a challenge coin, given to projects that significantly advance nuclear science.

The future of evaluated nuclear data


Los Alamos National Lab recognized the ENDF/B-VIII.0 effort with a challenge coin. The back face of the coin features theoretical physicist J. Robert Oppenheimer, who served as director of the Lab during the development of the first atomic bomb.

The latest release has been well received so far, and more feedback is expected over the next couple of years. The International Conference on Nuclear Data for Science and Technology, to be hosted in China in 2019, will provide an opportunity for the CSEWG to get extensive feedback from scientists and engineers outside the United States.

In the meantime, the organizations that make up the CSEWG are beginning to prioritize their nuclear data needs. These priorities will determine how they contribute to the next major release, which will likely not happen for at least another decade. Minor updates to the eighth ENDF library will be made as necessary and published as point releases.

“Releasing a major update to the ENDF library is similar to releasing a new operating system,” explained Sonzogni. “There will be problems that need to be resolved and small changes that we will need to make, but we expect this latest version to be around for a while. Each year, we come together as a community to decide if a point release is warranted.”

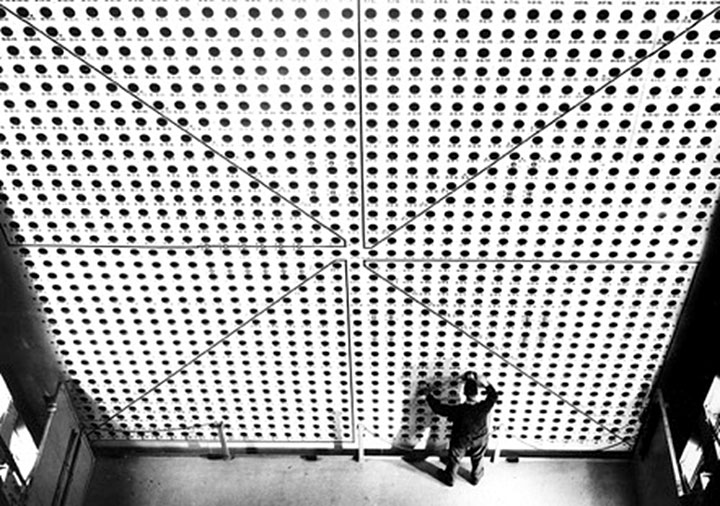

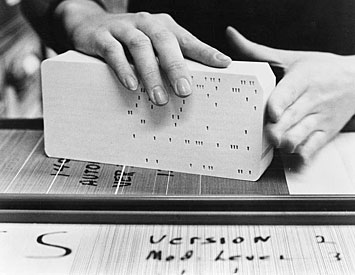

In a complementary effort, the CSEWG is modernizing the data file format, which dates back to the IBM punch cards of the 1960s—sheets of paper that encoded information by the presence or absence of holes in predefined positions. The new structure will be more hierarchical and allow for serialization of the data into both text-based and binary representations for high-performance computing applications.
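To make the punch-card heritage concrete, the sketch below parses one 80-column record in the style of the legacy ENDF format. The column layout is an assumption based on the commonly documented ENDF-6 conventions (six 11-character data fields, then material, file, section, and line-number tags) and should be checked against the format manual. Numbers in this style usually omit the "E" in the exponent, so the helper restores it. The function names are hypothetical, and the sample record is hand-built for illustration rather than copied from the library.

```python
import re


def parse_endf_float(field):
    """Convert an ENDF-style number such as ' 2.605600+4' to a float."""
    text = field.strip()
    if not text:
        return 0.0
    # Restore the 'e' before an exponent sign that directly follows a digit or point.
    text = re.sub(r"(?<=[\d.])([+-])(?=\d)", r"e\1", text)
    return float(text)


def parse_int(field):
    """Parse a fixed-width integer tag, treating a blank field as zero."""
    return int(field) if field.strip() else 0


def parse_endf_record(line):
    """Split one 80-column record into six values and its identifying tags."""
    line = line.ljust(80)
    fields = [line[i:i + 11] for i in range(0, 66, 11)]
    return {
        "values": [parse_endf_float(f) for f in fields],
        "mat": parse_int(line[66:70]),          # material number
        "mf": parse_int(line[70:72]),           # file (data category)
        "mt": parse_int(line[72:75]),           # section (reaction type)
        "line_number": parse_int(line[75:80]),
    }


# Hand-built illustrative record, not taken from an actual ENDF file.
sample = (
    "".join([" 2.605600+4", " 5.545440+1"] + [f"{0:>11d}"] * 4)
    + f"{2631:>4d}{3:>2d}{1:>3d}{1:>5d}"
)
record = parse_endf_record(sample)
print(record["values"][:2], record["mat"], record["mf"], record["mt"])
```

Fixed column positions like these are what a hierarchical format replaces: named, nested elements remove the need to know that a given quantity lives in, say, the second 11-character field of a particular record.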


The evaluated nuclear data were entered onto punch cards, like the ones seen above. This data storage medium was widely used with early computers until the 1970s. Credit: IBM.

According to Sonzogni, supercomputers will play an important role in enhancing the quality of the data for the next library release: “We will be able to do more sophisticated calculations that were not possible in the past. For example, neutron cross sections allow us to predict if a neutron will hit a particular material, but they do not tell you how accurate that prediction is. Supercomputing makes it possible to use the uncertainties we assign to our evaluations to engineer proper safety margins. Furthermore, by better understanding the deficiencies of the library, we can design experiments, which are very expensive, to target those deficiencies.”

A modernized data format and supercomputing-enabled calculations will only further advance a library that has already come a long way since it was first created in 1968.

“Fifty years is a long time in the scientific world,” said Sonzogni. “The library has greatly evolved through improvements over that time. It will stay around for many more years because it is widely used. Every nuclear engineer in the world relies on the ENDF, and, odds are, anyone running a transport code is using the data, though they may not know it.”

Brookhaven National Laboratory is supported by the Office of Science of the U.S. Department of Energy. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.
