Lighting the Way to Centralized Computing Support for Photon Science

Brookhaven Lab's Computational Science Initiative hosted a workshop for scientists and information technology specialists to discuss best practices for managing and processing data generated at light source facilities

December 18, 2018

On Sept. 24, scientists and information technology specialists from various labs in the United States and Europe participated in a full-day workshop—hosted by the Scientific Data and Computing Center at Brookhaven Lab—to share challenges and solutions to providing centralized computing support for photon science. From left to right, seated: Eric Lancon, Ian Collier, Kevin Casella, Jamal Irving, Tony Wong, and Abe Singer. Standing: Yee-Ting Li, Shigeki Misawa, Amedeo Perazzo, David Yu, Hironori Ito, Krishna Muriki, Alex Zaytsev, John DeStefano, Stuart Campbell, Martin Gasthuber, Andrew Richards, and Wei Yang.

Large particle accelerator–based facilities known as synchrotron light sources provide intense, highly focused photon beams in the infrared, visible, ultraviolet, and x-ray regions of the electromagnetic spectrum. The photons, or tiny bundles of light energy, can be used to probe the structure, chemical composition, and properties of a wide range of materials on the atomic scale. For example, scientists direct the brilliant light at batteries to resolve charge and discharge processes, at protein-drug complexes to understand how the molecules bind, and at soil samples to identify environmental contaminants.

As these facilities continue to become more advanced through upgrades to light sources, detectors, optics, and other technologies, they are producing data at a higher rate and with increasing complexity. These big data present a challenge to facility users, who must be able to analyze the data in real time to make sure their experiments are functioning properly. Once they have concluded their experiments, users also need ways to store, retrieve, and distribute the data for further analysis. High-performance computing hardware and software are critical to supporting such immediate analysis and post-acquisition requirements.

The U.S. Department of Energy’s (DOE) Brookhaven National Laboratory hosted a one-day workshop on Sept. 24 for information technology (IT) specialists and scientists from various labs around the world to discuss best practices and share experiences in providing centralized computing support to photon science. Many institutions provide limited computing resources (e.g., servers, disk/tape storage systems) within their respective light source facilities for data acquisition and a quick check and feedback on the quality of the collected data. Though these facilities have computing infrastructure (e.g., login access, network connectivity, data management software) to support usage, access to computing resources is often time-constrained because of the high number and frequency of experiments being conducted at any given time. For example, the Diamond Light Source in the United Kingdom hosts about 9,000 experiments in a single year. Because of the limited computing resources, extensive (or multiple attempts at) data reconstruction and analysis must typically be performed outside of the facilities. But centralized computing centers can provide the resources needed to manage and process data being generated by such experiments.

Continuing a legacy of computing support

Brookhaven Lab is home to the National Synchrotron Light Source II (NSLS-II), a DOE Office of Science User Facility that began operating in 2014 and is 10,000 times brighter than the original NSLS. Currently, 28 beamlines are in operation or being commissioned, one beamline is under construction, and there is space to accommodate an additional 30 beamlines. NSLS-II is expected to generate tens of petabytes of data per year in the next decade (one petabyte is equivalent to a stack of CDs standing nearly 10,000 feet tall).

Brookhaven is also home to the Scientific Data and Computing Center (SDCC), part of the Computational Science Initiative (CSI). The centralized data storage, computing, and networking infrastructure that SDCC provides has historically supported the RHIC and ATLAS Computing Facility (RACF). This facility provides the necessary resources to store, process, analyze, and distribute experimental data from the Relativistic Heavy Ion Collider (RHIC)—another DOE Office of Science User Facility at Brookhaven—and the ATLAS detector at CERN’s Large Hadron Collider in Europe.

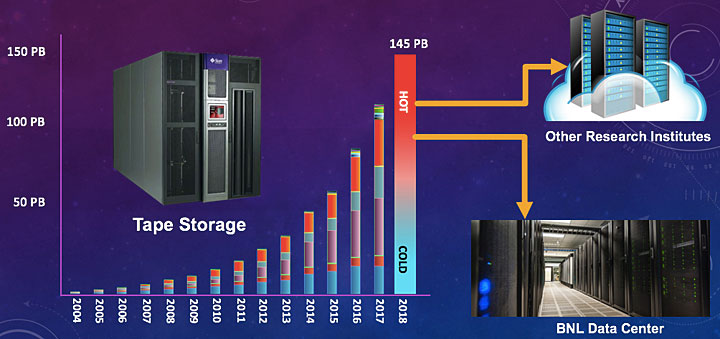

The amount of data that need to be archived and retrieved from tape storage has significantly increased over the past decade, as seen in the above graph. "Hot" storage refers to storing data that are frequently accessed, while "cold" storage refers to storing data that are rarely used.

“Brookhaven has a long tradition of providing centralized computing support to the nuclear and high-energy physics communities,” said workshop organizer Tony Wong, deputy director of SDCC. “A standard approach for dealing with their computing requirements has been developed for more than 50 years. New and advanced photon science facilities such as NSLS-II have very different requirements, and therefore we need to reconsider our approach. The purpose of the workshop was to gain insights from labs with a proven track record of providing centralized computing support for photon science, and to apply those insights at SDCC and other centralized computing centers. There are a lot of research organizations around the world who are similar to Brookhaven in the sense that they have a long history in data-intensive nuclear and high-energy physics experiments and are now branching out to newer data-intensive areas, such as photon science.”

Nearly 30 scientists and IT specialists from several DOE national laboratories—Brookhaven, Argonne, Lawrence Berkeley, and SLAC—and research institutions in Europe, including the Diamond Light Source and Science and Technology Facilities Council in the United Kingdom and the PETRA III x-ray light source at the Deutsches Elektronen-Synchrotron (DESY) in Germany, participated in this first-of-its-kind workshop. They discussed common challenges in storing, archiving, retrieving, sharing, and analyzing photon science data, and techniques to overcome these challenges.

Meeting different computing requirements

One of the biggest differences in computing requirements between nuclear and high-energy physics and photon science is the speed with which the data must be analyzed upon collection.

“In nuclear and high-energy physics, the data-taking period spans weeks, months, or even years, and the data are analyzed at a later date,” said Wong. “But in photon science, experiments sometimes only last a few hours to a couple of days. When your time at a beamline is this limited, every second counts. Therefore, it is vitally important for the users to be able to immediately check their data as it is collected to ensure it is of value. It is through these data checks that scientists can confirm whether the detectors and instruments are working properly.”

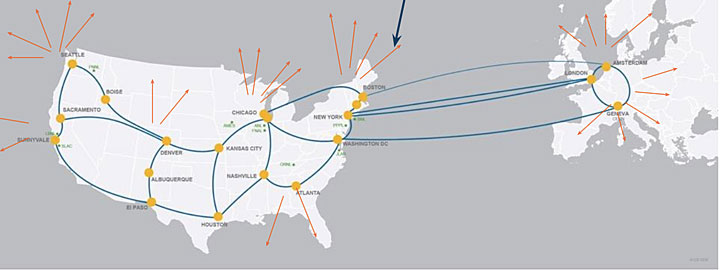

Photon science also has unique networking requirements, both internally within the light sources and central computing centers, and externally across the internet and remote facilities. For example, in the past, scientists could load their experimental results onto portable storage devices such as removable drives. However, because of the proliferation of big data, this take-it-home approach is often no longer feasible. Instead, scientists are investigating cloud-based data storage and distribution technology. While the DOE-supported Energy Sciences Network (ESnet), a DOE Office of Science User Facility stewarded by Lawrence Berkeley National Laboratory, provides high-bandwidth connections for national labs, universities, and research institutions to share their data, no such vehicle exists for private companies. Additionally, sending, storing, and accessing data over the internet can pose security concerns in cases where the data are proprietary or involve confidential information, as may be the case for corporate entities.

Even nonproprietary academic research requires that some security measures be in place to ensure that only the appropriate personnel are accessing the computing resources and data. The workshop participants discussed authentication and authorization infrastructure and mechanisms to address these concerns.

ESnet provides network connections across the world to enable sharing of big data for scientific discovery.

Identifying opportunities and challenges

According to Wong, the workshop raised both concern and optimism. Many of the world’s light sources will be undergoing upgrades between 2020 and 2025 that will increase today’s data collection rates by three to 10 times.

“If we are having trouble coping with data challenges today, even taking into account advancements in technology, we will continue to have problems in the future with respect to moving data from detectors to storage and performing real-time analysis on the data,” said Wong. “On the other hand, SDCC has extensive experience in providing software visualization, cloud computing, authentication and authorization, scalable disk storage, and other infrastructure for nuclear and high-energy physics research. This experience can be leveraged to tackle the unique challenges of managing and processing data for photon science.”

Going forward, SDCC will continue to engage with the larger community of IT experts in scientific computing through existing information-exchange forums, such as HEPiX. Established in 1991, HEPiX comprises more than 500 scientists and IT system administrators, engineers, and managers who meet twice a year to discuss scientific computing and data challenges in nuclear and high-energy physics. Recently, HEPiX has been extending these discussions to other scientific areas, with scientists and IT professionals from various light sources in attendance. Several of the Brookhaven workshop participants attended the recent HEPiX Autumn/Fall 2018 Workshop in Barcelona, Spain.

“The seeds have already been planted for interactions between the two communities,” said Wong. “It is our hope that the exchange of information will be mutually beneficial.”

With this knowledge sharing, SDCC hopes to expand the amount of support provided to NSLS-II, as well as the Center for Functional Nanomaterials (CFN)—another DOE Office of Science User Facility at Brookhaven. In fact, several scientists from NSLS-II and CFN attended the workshop, providing a comprehensive view of their computing needs.

“SDCC already supports these user facilities but we would like to make this support more encompassing,” said Wong. “For instance, we provide offline computing resources for post-data acquisition analysis but we are not yet providing a real-time data quality IT infrastructure. Events like this workshop are part of SDCC’s larger ongoing effort to provide adequate computing support to scientists, enabling them to carry out the world-class research that leads to scientific discoveries.”

Brookhaven National Laboratory is supported by the Office of Science of the U.S. Department of Energy. The Office of Science is the single largest supporter of basic research in the physical sciences in the United States, and is working to address some of the most pressing challenges of our time. For more information, please visit science.energy.gov.
